Proposed Media Schedule

The following data contains the proposed media schedule for the display ads, card decks, and direct mail campaigns. Included within the associated schedules are the cost and the total number of ad impressions.

The Process

The proposed media selection was reached by the following procedure. First, we identified our perceived Acme target audience. The target was divided into five categories, including VAR resellers, the single-user SoHo market, management, and the MIS department. CFOs are also influential since they approve the budgets; however, we assumed their influence was negligible since they would approve the overall price, not the specific application. Later, we categorized and rated these segments to determine where we should concentrate.

To further identify specific segments, we compiled data from the latest issue of Applicable Magazine to identify the industries with the most target prospects. This information was taken into account during our selection process.

Analysis of Past Campaigns

We also analyzed previous Acme ad campaigns. Although there was a lot of data, most of it looked either inaccurate or incomplete. However, we were still able to compile enough information to give us helpful insights into what has and has not been working. The brief notes should be self-explanatory.

Competitive Products

With the help of Adscope, we compiled the past 12-month history of our competitors’ campaigns. Although a few publications and their associated data appear to be missing, there is still enough to see the competitive trends. It is interesting to note that the larger ACE-specific vendors did not advertise for the entire year. It is also interesting to see where different competitors felt the market was best addressed.

Viewing this competitive data allows us to consider a blocking campaign where we match competitors, a separatist campaign where we fish in a different pond entirely, or a mix of the two. The data also shows how aggressive the competition is and where they are concentrating their efforts. The efforts used by Misc. Software to initiate their branding campaign are noteworthy.

Additional competitive information can be gleaned from the full Adscope report that is available upon request.

Filtered & Rated Media Selection

We compiled our original media list from the PR department and Bacon’s Media book. We then pared down the list to identify the ten or so major players within each of our selected categories. We collected media kits, prices, and dates from publications on this list. Later, we rated the publications from 1 to 5 and dropped the lesser entries. We also rated the categories to determine our best-perceived targets. The included list is a fourth-generation directory that shows the results of the rating process. The final media schedule was created from this list.

Database Modeling

It is important to note that the proposed media schedule could rest on inaccurate assumptions about our market, since it reflects our own preconception of our typical installed user base. This calls for caution, because we don’t even know the average age, income level, or type of publications typically read by our installed base. We have some information in our registration cards, but it is neither detailed nor accessible enough. As such, we may wish to do a database modeling study to determine our exact look-alike customer. We can still make several assumptions without this data, but it deserves consideration as we proceed further. A typical study can be conducted for approx. $8,000.

Display Ads

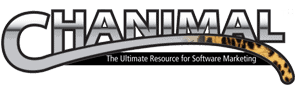

We drafted the current Media Schedule from the filtered and rated media list. Within the display ad section, the committed ad space is shaded dark, the recommended test period for the respective publication is shown in medium gray, and the proposed full-term schedule is highlighted in light gray. The rates represent the best information at the time of this proposal and may be improved by the time of placement.

In early negotiations, we have already asserted the need for the right page placement, either in a set location or alongside the preselected editorial sections. Along with placement and price, we have also requested and received first-draft proposals that include free use of names and specific market research, which will be valuable as we initiate future direct mail campaigns.

The schedule peaks at over 1.5 million impressions corresponding to when Widget 2.2 is most likely to be released. Of course, this schedule can be altered according to the actual release date. The budget amount is based on last year’s historical actuals for the complete year. This amount can also be altered according to the desired product and company recognition. The media placement curve shows that we are increasing the number of ad impressions considerably during the time of the product release followed by a semi-maintenance schedule.

This bell curve campaign is designed to initiate awareness and create the maximum amount of noise as the new product, in a new category, hits the market. It is also designed to make the optimal splash with a smaller budget. Of course, the ad campaign is most effective when executed simultaneously with Acme’s PR, channel, and Internet efforts.

Graphs are also included; they are especially helpful for Inside Sales to picture and plan for the possible lead workload.

Success Indicators and Tracking Methods

One of the most complex tasks of advertising is to properly measure the effectiveness of various campaigns. This is determined first by deciding what constitutes a successful campaign and then tracking the associated variables.

Two barometers are considered when evaluating the effectiveness of advertising: one is the increase in product and company awareness, and the other is the Advertising Effort Response Elasticity (AERE), which is the relationship between the percentage change in advertising expenditures and the percentage change in sales.
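As a rough illustration of the AERE definition, the ratio can be computed from two periods of spend and sales. This sketch assumes the conventional elasticity ordering (percentage change in sales divided by percentage change in advertising spend); the function name and all dollar figures are hypothetical and are not Acme data.

```python
def aere(ad_spend_before, ad_spend_after, sales_before, sales_after):
    """Percentage change in sales divided by percentage change in ad spend.

    Assumed ordering: the usual elasticity convention, not confirmed by the proposal.
    """
    pct_change_ads = (ad_spend_after - ad_spend_before) / ad_spend_before
    pct_change_sales = (sales_after - sales_before) / sales_before
    if pct_change_ads == 0:
        raise ValueError("advertising spend did not change; elasticity is undefined")
    return pct_change_sales / pct_change_ads


# Hypothetical figures: ad spend rises 20% while sales rise 10%.
example = aere(100_000, 120_000, 500_000, 550_000)
print(round(example, 2))  # 0.5
```

A value below 1 would mean sales grew more slowly than advertising spend over the period; as the surrounding discussion points out, the figure is only as good as the tracking behind it.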

Pre- and post-tests with focus groups, consumer juries, and phone surveys conducting recall tests are methods to determine product, brand, and company awareness. Excellent tracking processes are the only way to measure the AERE.

Although both barometers are important, directly trackable sales are the prevailing emphasis at Acme Software. The caution in using direct response sales volume as the criterion for judging advertising effectiveness, however, is that it doesn’t account for the other promotion and marketing mix elements, which are taking effect simultaneously. Furthermore, many types of advertising do not produce immediate direct response sales results (e.g., ads that are meant to increase credibility without a direct call to action).

Our campaigns and media schedule are designed to produce results as immediately as possible. The exception is our ACE high-end ad, which has a call to action for information but not for sales, since it is corporate-focused and corporate buyers would not be purchasing single units. In addition, the sales cycle for corporate sales is expected to be much longer, which makes it more difficult to measure the effectiveness of this campaign empirically without a complete reseller lead tracking system (we are putting one in place but don’t yet know how effective it will be).

With this said, Acme Software is running several different types of campaigns (direct mail, display ads, and card decks) and each one will be measured and tracked differently.

Direct Mail

Unlike display advertising, the effectiveness of the direct mail campaign is very simple to measure. With direct mail, we typically expect to close between 1/3% and 2% of the total mailing for the prospect piece and 2-7% (unknown since it hasn’t been measured before) from the upgrade piece. If the results lie anywhere within this range, or if the piece still pays for itself because of the higher margins, we will consider it a success by industry standards.

At our projected cost, a 1% response should be the break-even point and would cover the cost of production, design, shipping, etc. Even with just a 1% response, the campaign would be considered a success since we would recover all of our money and create an ad impression among the other 93-99% that should bring in the “halo” sales. It is the combination of these repeated halo impressions that creates the overall awareness and branding that lead people to seek us out and drive direct and reseller sales.
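The break-even reasoning above can be sketched as a small calculation. The per-piece cost and per-sale margin below are hypothetical values chosen only so that break-even lands at the 1% figure quoted in the text; they are not Acme’s actual costs.

```python
def break_even_response_rate(cost_per_piece, margin_per_sale):
    """Response rate at which margin from sales equals the mailing cost."""
    return cost_per_piece / margin_per_sale


def mailing_net(pieces, cost_per_piece, margin_per_sale, response_rate):
    """Net dollars from a mailing: margin from closed sales minus total cost."""
    total_cost = pieces * cost_per_piece
    closed_sales = pieces * response_rate
    return closed_sales * margin_per_sale - total_cost


# Hypothetical inputs: $1.00 all-in cost per piece, $100 margin per sale.
rate = break_even_response_rate(1.00, 100.00)
print(f"break-even response: {rate:.1%}")

# Net result for a 10,000-piece drop at several response rates.
for response in (0.005, 0.01, 0.02):
    net = mailing_net(10_000, 1.00, 100.00, response)
    print(f"{response:.1%} response -> net ${net:,.0f}")
```

Any halo sales would come on top of these directly trackable numbers, so this calculation understates the full effect of the mailing.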

Card Decks

Card decks, like direct mail, are also easy to track and measure. When the card decks hit, they generate direct sales that we can easily attribute to the deck. They also generate a halo effect similar to that of direct mail. The expected return from a card deck is 1/2-2%.

Display Advertising

Direct response display advertising, like card decks and direct mail, would normally get a response between 1/3% and 2%. Products such as Animal would usually be on the lower end of the return (1/3-1/2%) because of the higher price, but the higher-than-normal direct margins would typically compensate enough to generate a break-even response. For last year’s campaign, Widget1 generated about a 96% return, which meant that the total amount that it cost in actual dollars was a little over $9,000, or approx. 0.01% of the total budget.

The industry average for direct response ads is a 20-50% trackable ROI for a successful campaign. According to Jeff Wiss, Co-founder of Launch Strategies, a consulting firm for several large software companies that has been tracking ad campaigns for the last seven years, “a campaign is highly successful if it pays for 65-70% of its cost.”

By industry standards, last year’s campaign, at 96%, was very successful, even after considering the opportunity cost of our expenditures. If we can achieve similar results with our standard Widget1 product, and get back what we invested, plus increase branding and awareness, we will consider this year’s campaign to be just as effective. The only ad campaign that may have to be tracked non-empirically, as mentioned earlier, is the Widget 2.2 campaign, and we will be watching this campaign very carefully to pick up on any early indicators and adjust the campaign accordingly.
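To make the payback arithmetic concrete, here is a minimal sketch that assumes “return” means the share of campaign cost recovered through trackable sales (the same sense as the Wiss quote). The function names are hypothetical, and the implied spend is an inference from the 96% and $9,000 figures, not a number stated in this proposal.

```python
def net_cost(spend, payback_rate):
    """Dollars not recovered through trackable sales."""
    return spend * (1 - payback_rate)


def implied_spend(net_cost_dollars, payback_rate):
    """Campaign spend implied by a known net cost and payback rate."""
    return net_cost_dollars / (1 - payback_rate)


def meets_benchmark(payback_rate, threshold=0.65):
    """True if the payback reaches the low end of the quoted 65-70% benchmark."""
    return payback_rate >= threshold


# Figures quoted in the text: about 96% payback and roughly $9,000 net cost.
spend = implied_spend(9_000, 0.96)
print(f"implied spend: about ${spend:,.0f}")
print("meets 65% benchmark:", meets_benchmark(0.96))
```

Under this reading, a campaign at the 65% benchmark would leave 35 cents of every dollar unrecovered, against 4 cents at last year’s 96%.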

Media Schedule – Display Ads

Following is a six-month mockup of a media schedule covering five publications. The prices are fictitious, and the new product hits in March. The actual media schedule would also include page size and editorial info.

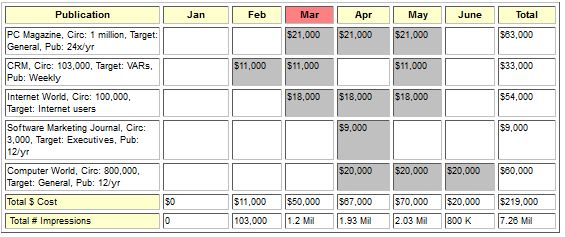

Media Schedule – Card Decks

Following is a six-month mockup of a media schedule covering five direct response card decks. Card decks are typically available in standard (3×5) and jumbo (4×6) sizes. Although jumbo cards are more visible and offer more space, they typically pull only about 10% more responses, yet cost 33% more. You can typically negotiate for placement (e.g., on the cover or at the top of the deck). However, I don’t know if placement is as critical with card decks as it is with magazine ads, since folks already know that they are reading advertisements and are just looking for whatever catches their eye.
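The jumbo-versus-standard trade-off can be expressed as a relative cost per response. This sketch uses only the two percentages quoted above (about 10% more pull, 33% more cost); the function name is hypothetical.

```python
def relative_cost_per_response(extra_cost, extra_pull):
    """Cost per response of the larger card relative to the standard card.

    Both arguments are fractional increases over the standard card,
    e.g. 0.33 for 33% more cost and 0.10 for 10% more responses.
    """
    return (1 + extra_cost) / (1 + extra_pull)


ratio = relative_cost_per_response(extra_cost=0.33, extra_pull=0.10)
print(f"jumbo cost per response is {ratio:.2f}x standard")  # 1.21x
```

On these figures, each jumbo response costs roughly 21% more than a standard-card response, so the larger size is worth buying only if the added visibility has value beyond direct response.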

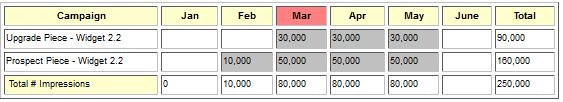

Media Schedule – Direct Mail

Following is a six-month mockup of a direct mail campaign. Direct mail is a highly specialized field where empirical data talks and assumptions walk. Several direct mail tips should help increase response. Direct fax is a good way to test your premium offer before the actual campaign. Otherwise, various offers and designs should be created and tested, and the most successful should be adopted. This schedule shows a staggered drop so that fulfillment doesn’t overwhelm the inside sales team.

Media Schedule – Graphical

This graph shows the kind of groundswell that should occur during a product launch. It demonstrates several primary demand vehicles (display ads, card decks, and direct mail) that can be combined to create the necessary product awareness.